Search engines are tools that find and rank web content matching a user’s search query.

Each search engine consists of two main parts:

Search index. A digital library of information about web pages.

Search algorithm(s). Computer program(s) that rank matching results from the search index.

Examples of popular search engines include Google, Bing, and DuckDuckGo. (ahrefs.com)

How do search engines order results?

To be effective, search engines need to understand exactly what kind of information is available and present it to users logically. The way they accomplish this is through three fundamental actions: crawling, indexing, and ranking.

Search engines use algorithms to sort the results and try to place the links that are most useful to you at the top of the page.

PageRank is the best-known algorithm used to improve web search results. In simple terms, PageRank is a popularity contest.

The more links that point to a webpage, the more useful it will seem. This means it will appear higher up in the results. (bbc.co.uk)
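To make the "popularity contest" idea concrete, here is a minimal sketch of a simplified PageRank computed by repeated passes over a link graph. It is an illustration only, not how any production search engine is implemented. The page names in `toy_web` are made up, and the damping value of 0.85 is the commonly cited default, used here as an assumption. Note that in this scheme a link from a well-linked page passes on more score than a link from an obscure one.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Return an approximate PageRank score for every page.

    `links` maps each page to the list of pages it links to.
    """
    # Collect every page that appears as a source or a target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)

    # Start with the score spread evenly across all pages.
    ranks = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share of the score.
        new_ranks = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its score equally among the pages it links to.
                share = damping * ranks[page] / len(targets)
                for target in targets:
                    new_ranks[target] += share
            else:
                # A page with no outgoing links spreads its score over everyone.
                share = damping * ranks[page] / n
                for other in pages:
                    new_ranks[other] += share
        ranks = new_ranks
    return ranks


# Hypothetical four-page site used only for illustration.
toy_web = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}
scores = pagerank(toy_web)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, "home" ends up with the highest score because three pages link to it, while "orphan" gets only the baseline share because nothing links to it.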

How Do Search Engines Crawl, Index, and Rank Content?

Search engines look simple from the outside. You type in a keyword, and you get a list of relevant pages. But that deceptively easy interchange requires a lot of computational heavy lifting backstage.

To make sure that the algorithms are doing their job properly, Google uses human Search Quality Raters to test and refine the algorithm. This is one of the few times when humans, not programs, are involved in how search engines work.

Crawling

Search engines rely on crawlers, automated scripts, to scour the web for information.

Crawlers visit each site on their list systematically, following the links found in attributes like href and src to jump to internal or external pages.

Over time, the crawlers build an ever-expanding map of interlinked pages.
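The crawling loop described above can be sketched with Python's standard library. This is a simplified illustration, not a real crawler: the `FAKE_SITE` dictionary stands in for the network, the `example.com` URLs are placeholders, and the `fetch` parameter is an assumed hook where a real HTTP client would go. A real crawler would also respect robots.txt, rate limits, and much more.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect absolute URLs found in href and src attributes."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                # Resolve relative links against the page they appear on.
                self.links.append(urljoin(self.base_url, value))


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl. Returns a map of page URL -> outgoing links.

    `fetch` is any callable that returns the HTML for a URL, or None.
    """
    seen = {start_url}
    queue = deque([start_url])
    link_map = {}
    while queue and len(link_map) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # Not an HTML page we can read (e.g. an image).
        parser = LinkExtractor(url)
        parser.feed(html)
        link_map[url] = parser.links
        for link in parser.links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return link_map


# In-memory stand-in for a website, so the sketch runs without network access.
FAKE_SITE = {
    "https://example.com/": '<a href="/about">About</a> <img src="/logo.png">',
    "https://example.com/about": '<a href="/">Home</a> <a href="/blog">Blog</a>',
    "https://example.com/blog": '<a href="/about">About</a>',
}
site_map = crawl("https://example.com/", FAKE_SITE.get)
for page, links in site_map.items():
    print(page, "->", links)
```

The resulting `site_map` is a small version of the "map of interlinked pages": the blog page is only discovered because the about page links to it.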

Links: Use internal links on every page. Crawlers need links to move between pages. Pages that nothing links to cannot be reached by following links, so they may never be crawled or indexed.

XML sitemap: Make a list of all your website’s pages, including blog posts. This list acts as an instruction manual for crawlers, telling them which pages to crawl.

There are plugins and tools like SEOPressor and Google XML Sitemaps that will generate and update your sitemap when you publish new content. (blog.alexa.com)
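If you are curious what such a tool produces, here is a minimal sketch that builds a sitemap by hand with Python's standard library. The URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper written for this example, not part of any plugin mentioned above. The namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    `last_modified` is a date string such as "2024-01-15".
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Returns the XML as a string, without the <?xml ...?> declaration.
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages used only for illustration.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/first-post", "2024-02-01"),
])
print(sitemap_xml)
```

When saving the result to a sitemap.xml file, you would normally add an XML declaration line at the top.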

Indexing

After finding a page, a bot fetches (or renders) it in much the same way your browser does. That means the bot should “see” what you see, including images, videos, or other types of dynamic page content.

Bots are effectively “blind” to the content of images, however: if they find an image without alt text, they will not “see” it. That means you miss the chance to rank that image and, with it, your web page.
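A quick way to catch this problem is to scan your own HTML for images that have no alt text. The sketch below uses only Python's standard library. `images_missing_alt` and the sample markup are hypothetical, written just for this example. It treats an empty alt attribute as missing, which is an assumption: an empty alt is sometimes used on purpose for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Record the src of every <img> tag that has no usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Assumption: a blank or whitespace-only alt counts as missing.
        if not alt or not alt.strip():
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    """Return the src values of images without alt text, in page order."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Sample markup used only for illustration.
sample_html = (
    '<img src="cat.png" alt="A cat sleeping on a laptop">'
    '<img src="chart.png">'
    '<img src="banner.png" alt="  ">'
)
print(images_missing_alt(sample_html))
```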

The bot organizes this content into categories, including images, CSS and HTML, text and keywords, etc. This process allows the crawler to “understand” what’s on the page, a necessary precursor to deciding for which keyword searches the page is relevant. (blog.alexa.com)
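One common way to organize page text for lookup is an inverted index, which maps each word to the pages that contain it. The sketch below is a toy version under that assumption and is not a description of how any particular search engine stores its index. The page URLs and texts are invented, and `build_index` and `search` are hypothetical helpers.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into simple alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map every word to the set of page URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index, query):
    """Return the pages that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Invented pages used only for illustration.
pages = {
    "/crawling": "How crawlers follow links across the web",
    "/indexing": "How search engines index pages and keywords",
    "/ranking": "How search engines rank pages by links and freshness",
}
index = build_index(pages)
print(sorted(search(index, "search engines")))
print(sorted(search(index, "links")))
```

Looking up a word in the index is fast because the work of reading every page was done once, at indexing time, rather than on every search.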

Ranking

Google uses a whole series of algorithms to rank relevant results. Many of the ranking factors used in these algorithms analyze the general popularity of a piece of content and even the qualitative experience users have when they land on the page. These factors include:

“Freshness,” or how recently content was updated

Conclusion

Once bots and users can “read” your website without issues, you need to give search engines the right signals for their ranking algorithms.

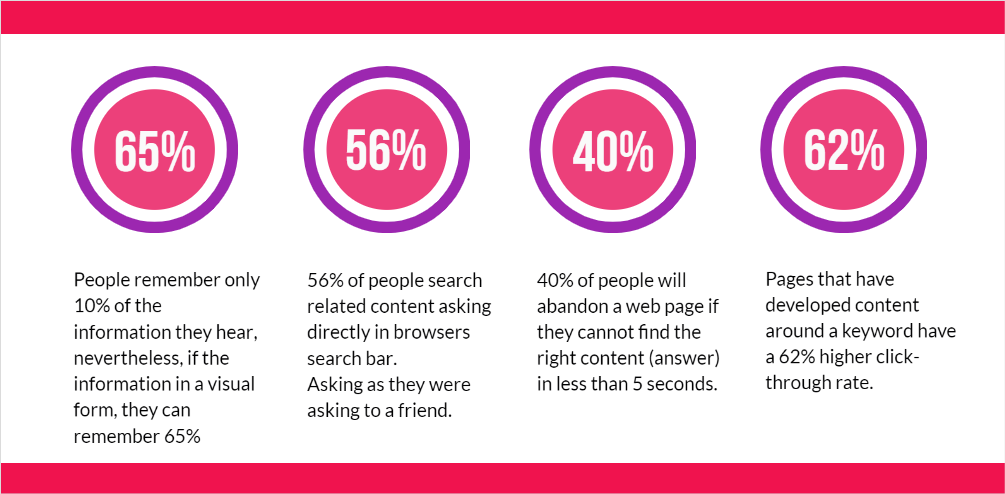

And never forget that Content Matters!